Putting Amazon’s Provisioned IOPS to the test

Last week Amazon released Provisioned IOPS (PIOPS), and we were fortunate to get beta access to this new storage solution. Being good geeks, we put PIOPS through its paces: over the last few weeks we ran a series of tests against it, and the results are below.

To date, standard EBS volumes have supported moderate or bursty I/O requirements. Database workloads on standard EBS suffered from inconsistent service times and variable performance; in other words, there was not much protection against noisy neighbors in your multi-tenant infrastructure. RAIDing EBS volumes helped reduce that variability but never completely got rid of it. Amazon created Provisioned IOPS with this problem in mind: the goal was a reliable and consistent storage solution optimized for database workloads.

To make EBS a better fit for database workloads, Amazon focused on improving throughput, service times, and consistency. The result is what they are calling Provisioned IOPS, which has the following features.

- Dedicated IOPS: You can now purchase EBS volumes with dedicated IOPS. For example, you can provision a 100GB volume with 500 IOPS, or a 100GB volume with 1000 IOPS. However, you may not exceed a ratio of 1/10 for GB to IOPS. A 100GB volume with 1000 IOPS is allowed because the ratio is 1/10, but a 50GB volume with 1000 IOPS is not, since that ratio would be 1/20.

- IOPS scale linearly: Want more than the 1000 IOPS of a single volume? RAID multiple volumes together and you can scale up to 10,000 IOPS with 10 x 1000 IOPS volumes. Your limiting factor will be your NIC, since in the end EBS is a network-attached storage technology.

- EBS-optimized instances: One of the problems with EBS was that the NIC of your virtual instance carried both EBS traffic and all your other traffic, so if you saturated your network link with other traffic, your EBS volume performance suffered. Amazon has been fiddling under the hood and now offers what it’s calling EBS-optimized instances. Basically, the network interfaces of these instances have some kind of QoS in place to ensure that EBS traffic from the virtual machine has dedicated bandwidth to EBS storage. Amazon is sketchy on details but stated that an m1.large has a 500Mbits/sec link and m1.xlarge and above have 1000Mbits/sec. This means that on an m1.xlarge and above you could theoretically push up to 896Mbits/sec or 112MB/sec of sustained traffic to EBS.
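The provisioning rules in the first two bullets can be captured in a few lines. The following is a minimal Python sketch, not anything Amazon publishes: the function and constant names are our own, and the limits (10 IOPS per GB, 1000 IOPS per volume) are simply the figures quoted above.

```python
# Limits as quoted in this post; the names below are hypothetical.
MAX_IOPS_PER_GB = 10        # a GB-to-IOPS ratio of 1/10
MAX_IOPS_PER_VOLUME = 1000  # per-volume figure used in this post


def is_valid_piops_volume(size_gb: int, iops: int) -> bool:
    """Return True if the size/IOPS pair respects the limits quoted above."""
    if size_gb <= 0 or iops <= 0:
        return False
    return iops <= MAX_IOPS_PER_VOLUME and iops <= size_gb * MAX_IOPS_PER_GB


def striped_iops(volume_count: int, iops_per_volume: int) -> int:
    """Aggregate IOPS of a stripe set, assuming ideal linear scaling."""
    return volume_count * iops_per_volume


if __name__ == "__main__":
    print(is_valid_piops_volume(100, 500))   # 100GB at 500 IOPS
    print(is_valid_piops_volume(100, 1000))  # 100GB at 1000 IOPS (ratio 1/10)
    print(is_valid_piops_volume(50, 1000))   # 50GB at 1000 IOPS (ratio 1/20)
    print(striped_iops(10, 1000))            # 10 x 1000 IOPS volumes
```

The aggregate figure is only an upper bound; as noted above, the instance's network link to EBS is the practical limit.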

So how do all these changes compare with standard EBS? We ran some performance tests to find out.

The Setup

We set up two servers, one using standard EBS volumes and the other using PIOPS volumes. The PIOPS server was launched with the EBS-optimized option, and its volumes were provisioned at 1000 IOPS each. The EBS server used standard volumes, and the EBS-optimized option was not set. Details are below.

Server Setup

OS: Ubuntu 12.04 LTS (64bit)

AMI: ami-82fa58eb

Amazon Region: US-EAST-1

Amazon instances tested: m1.large & m2.4xlarge

EBS volume setup

Standard EBS Volumes. 10 x 100GB

PIOPS volumes. 10 x 100GB 1000IOPs provisioned volumes.

Filesystem: XFS

RAID setup

RAID software: LVM2

LVM version: 2.02.66(2) (2010-05-20)

Library version: 1.02.48 (2010-05-20)

Driver version: 4.22.0

RAID configuration

lvcreate vg-ebs -n lvol0 -i10 -I64 -l 100%VG # Note the 64KB stripe size.

Testing software and configuration

Test software: Sysbench 0.4.12 # http://sysbench.sourceforge.net

Test configuration: Nine tests were run for each type of test-mode:

(seqwr, seqrd, rndrd, rndwr, rndrw)

# sysbench --test=fileio --file-total-size=32GB --file-test-mode=$TEST_MODE \
    --file-block-size=16K --max-time=300 --max-requests=10000000 --num-threads=16 \
    --init-rng=on --file-num=16 --file-extra-flags=direct --file-fsync-freq=0 run
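To make the test matrix concrete, here is a rough Python sketch that builds the sysbench command line for every test mode and repeats each one nine times. The flags are copied from the command above; the function names, the constants, and the ordering of the runs are our own assumptions, since the post does not say how the runs were sequenced. The sketch only prints the commands and does not execute them.

```python
import shlex
from typing import List, Tuple

# Test modes and run count as listed in this post.
TEST_MODES = ["seqwr", "seqrd", "rndrd", "rndwr", "rndrw"]
RUNS_PER_MODE = 9


def sysbench_command(test_mode: str) -> List[str]:
    """Build the argument list for one fileio run in the given mode."""
    return [
        "sysbench", "--test=fileio", "--file-total-size=32GB",
        "--file-test-mode=" + test_mode,
        "--file-block-size=16K", "--max-time=300",
        "--max-requests=10000000", "--num-threads=16",
        "--init-rng=on", "--file-num=16",
        "--file-extra-flags=direct", "--file-fsync-freq=0", "run",
    ]


def build_test_plan() -> List[Tuple[str, int, List[str]]]:
    """Return (mode, run_number, argv) for every run.

    Running all nine repetitions of one mode back to back is an
    assumption; the original ordering is not documented.
    """
    plan = []
    for mode in TEST_MODES:
        for run_number in range(1, RUNS_PER_MODE + 1):
            plan.append((mode, run_number, sysbench_command(mode)))
    return plan


if __name__ == "__main__":
    for mode, run_number, argv in build_test_plan():
        printable = " ".join(shlex.quote(arg) for arg in argv)
        print("[%s run %d] %s" % (mode, run_number, printable))
```

Keep in mind that sysbench's fileio test expects its test files to exist already, so a matching `prepare` invocation has to come before the first `run`.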

Test Results

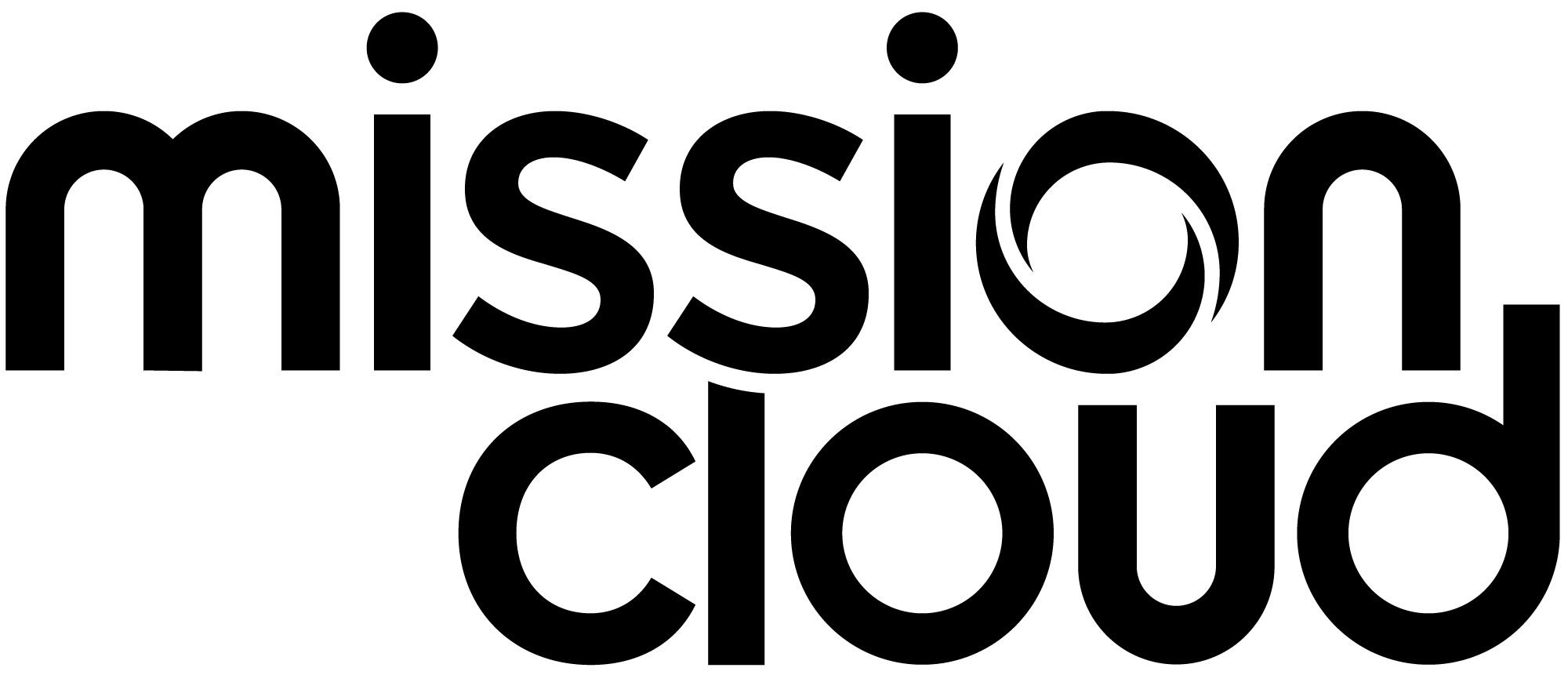

IOPS (transactions/sec)

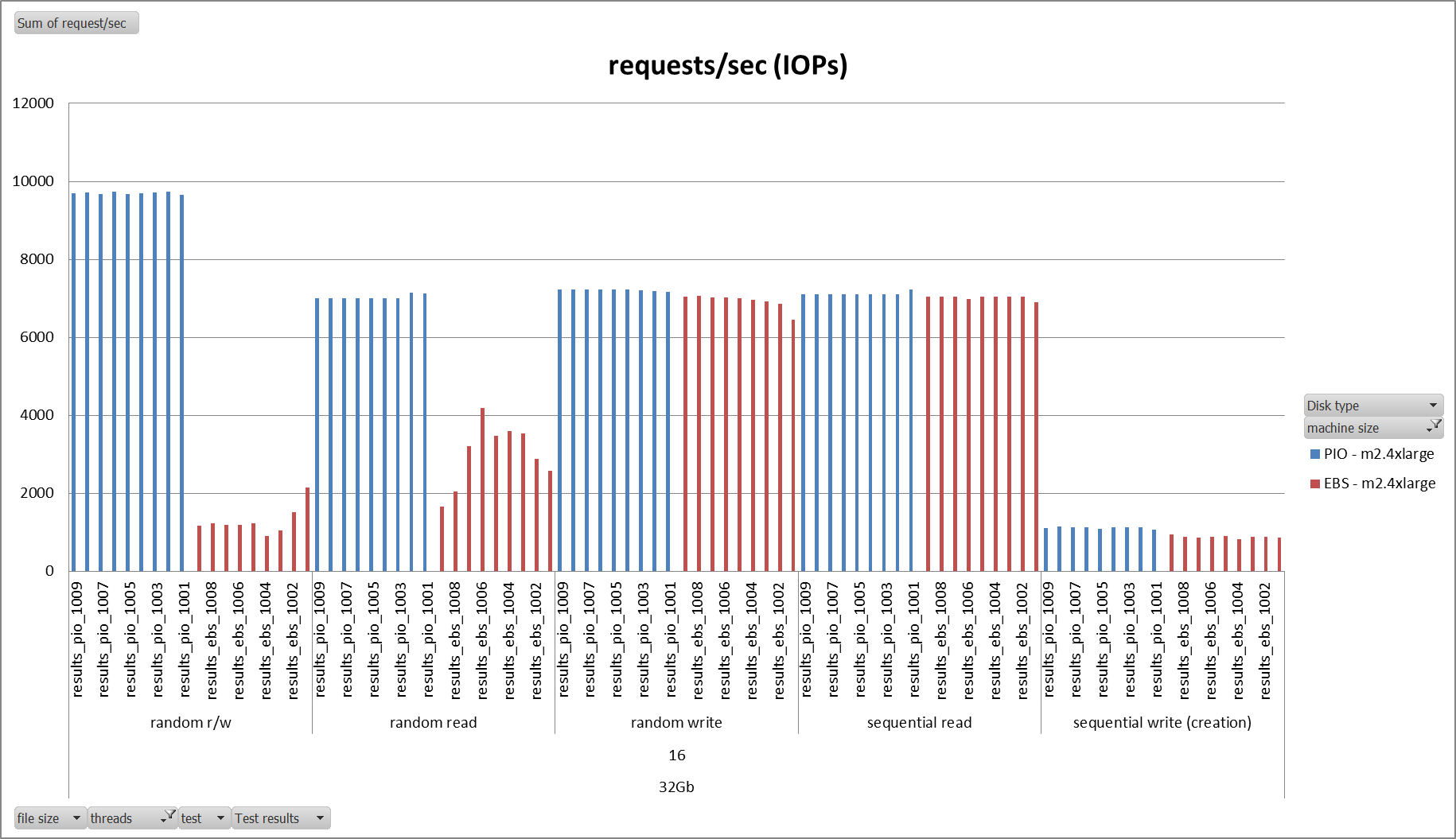

Based upon our nine tests, the PIOPS volumes performed better on random read/write and random read workloads on both m1.large and m2.4xlarge servers. For random writes, sequential reads, and sequential writes, PIOPS and EBS performed approximately the same. Of note, the m2.4xlarge was able to push almost 10K IOPS, which is what Amazon has advertised for the new PIOPS service. In other tests we were able to push random r/w up to 6000 IOPS on an m1.large instance.

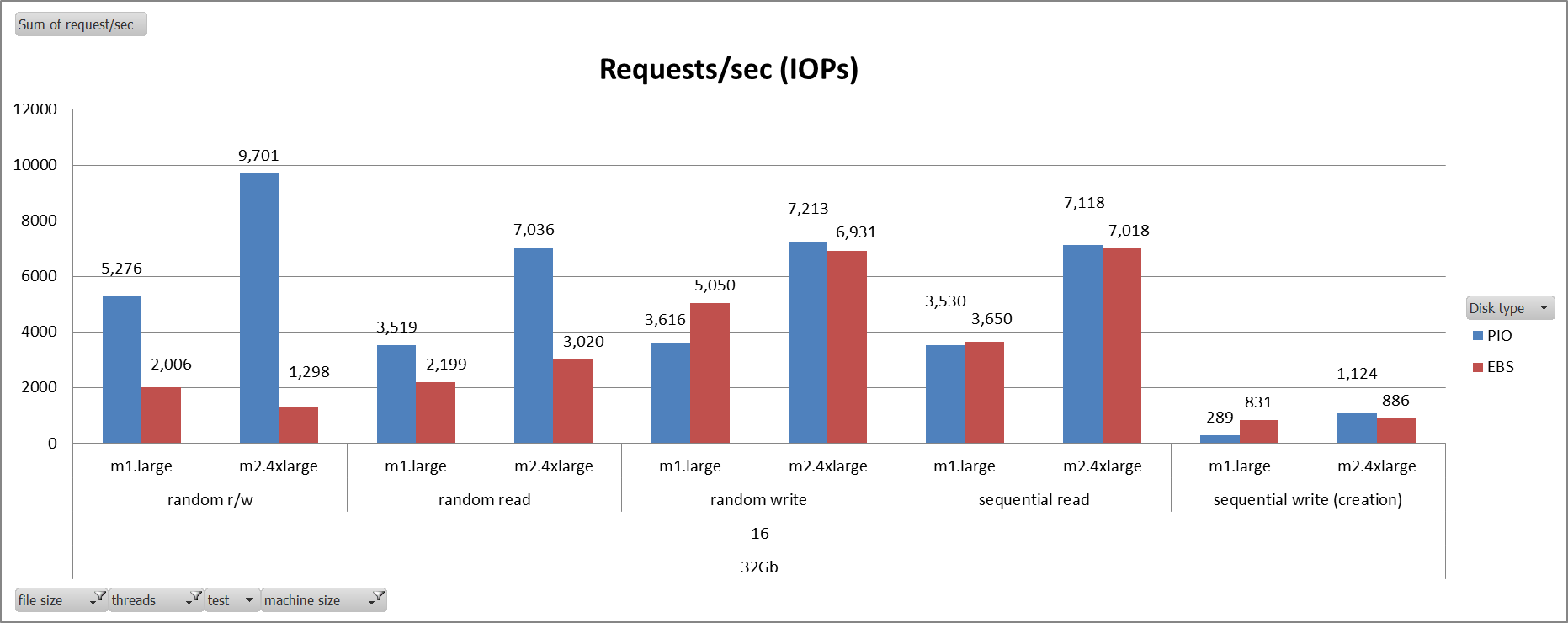

Throughput (MB/sec)

The throughput graphs show the same results as the IOPS graphs, except that the units are now MB/sec. If you multiply the number of IOPS by the block size of 16K you get this graph. As you can see the MB/sec of the m1.large is about 80MB/sec. Considering the throughput of an m1.large is capped at 500Mbits/sec or 62.5MB/sec, we can see that reads and writes on the m1.large cap out at about 55MB/sec, which is about 88% utilization. The other 12% should be TCP, OS and application overhead. On the m2.4xlarge that bandwidth cap is raised to 1000Mbits/sec or 125MB/sec, and we see the throughput of random reads and random writes jump to 109 and 112MB/sec respectively. Again this accounts for about 87% and 89% utilization of the bandwidth cap. This shows that the performance of PIOPS volumes on EBS-optimized instances is bound by the throughput limits of those instances.
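The arithmetic behind these utilization figures is easy to reproduce. Below is a small Python sketch with helper names of our own choosing. It assumes 8 bits per byte for the link conversion and, for the IOPS-to-throughput helper, 1 MB = 1024 KB; neither convention is stated explicitly in the test output, so treat the results as approximate.

```python
def link_cap_mb_per_sec(link_mbits_per_sec: float) -> float:
    """Convert a link speed in Mbits/sec to MB/sec (8 bits per byte)."""
    return link_mbits_per_sec / 8.0


def utilization(measured_mb_per_sec: float, link_mbits_per_sec: float) -> float:
    """Fraction of the EBS-optimized link consumed by the measured throughput."""
    return measured_mb_per_sec / link_cap_mb_per_sec(link_mbits_per_sec)


def throughput_mb_per_sec(iops: float, block_size_kb: float = 16.0) -> float:
    """Throughput implied by an IOPS figure at a fixed block size.

    Assumes 1 MB = 1024 KB, which is our assumption, not a stated fact.
    """
    return iops * block_size_kb / 1024.0


if __name__ == "__main__":
    # Figures quoted in this post: (label, measured MB/sec, link Mbits/sec).
    quoted = [
        ("m1.large reads/writes", 55.0, 500.0),
        ("m2.4xlarge random reads", 109.0, 1000.0),
        ("m2.4xlarge random writes", 112.0, 1000.0),
    ]
    for label, measured, link in quoted:
        cap = link_cap_mb_per_sec(link)
        print("%s: %.1f of %.1f MB/sec = %.1f%%"
              % (label, measured, cap, 100.0 * utilization(measured, link)))
```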

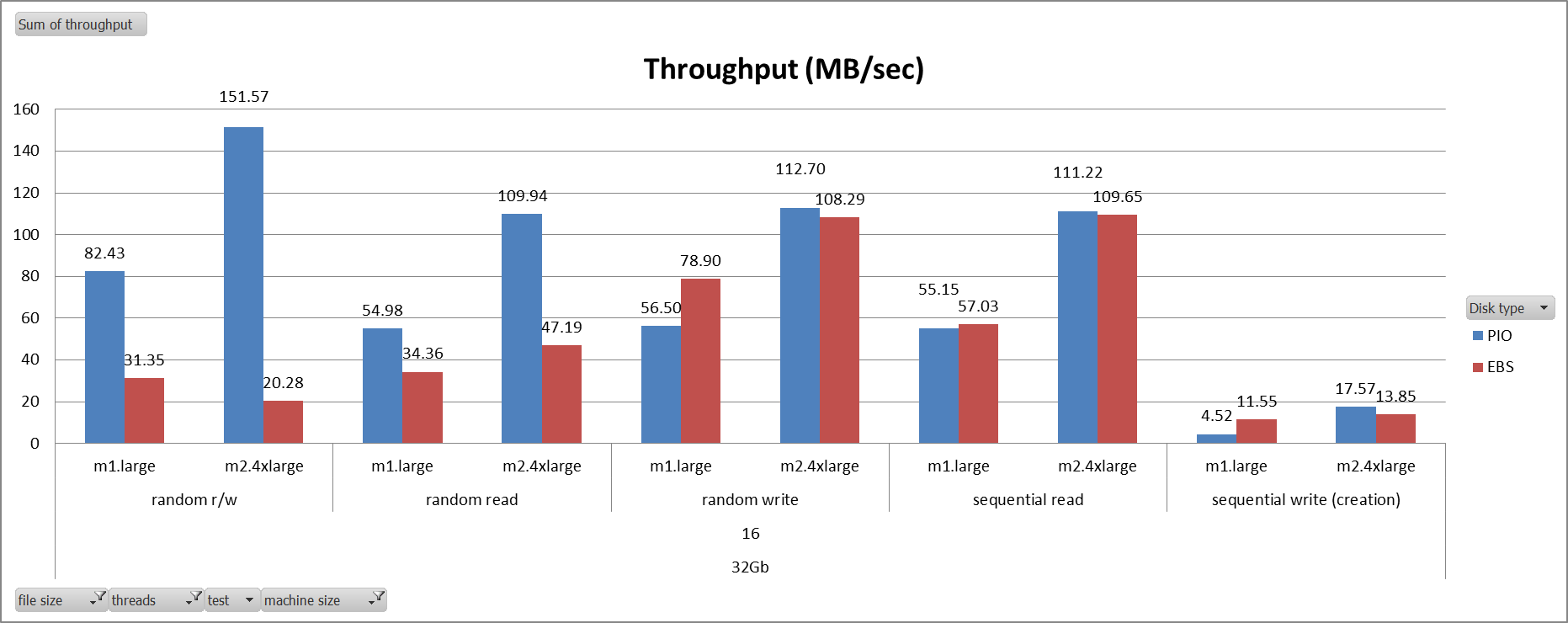

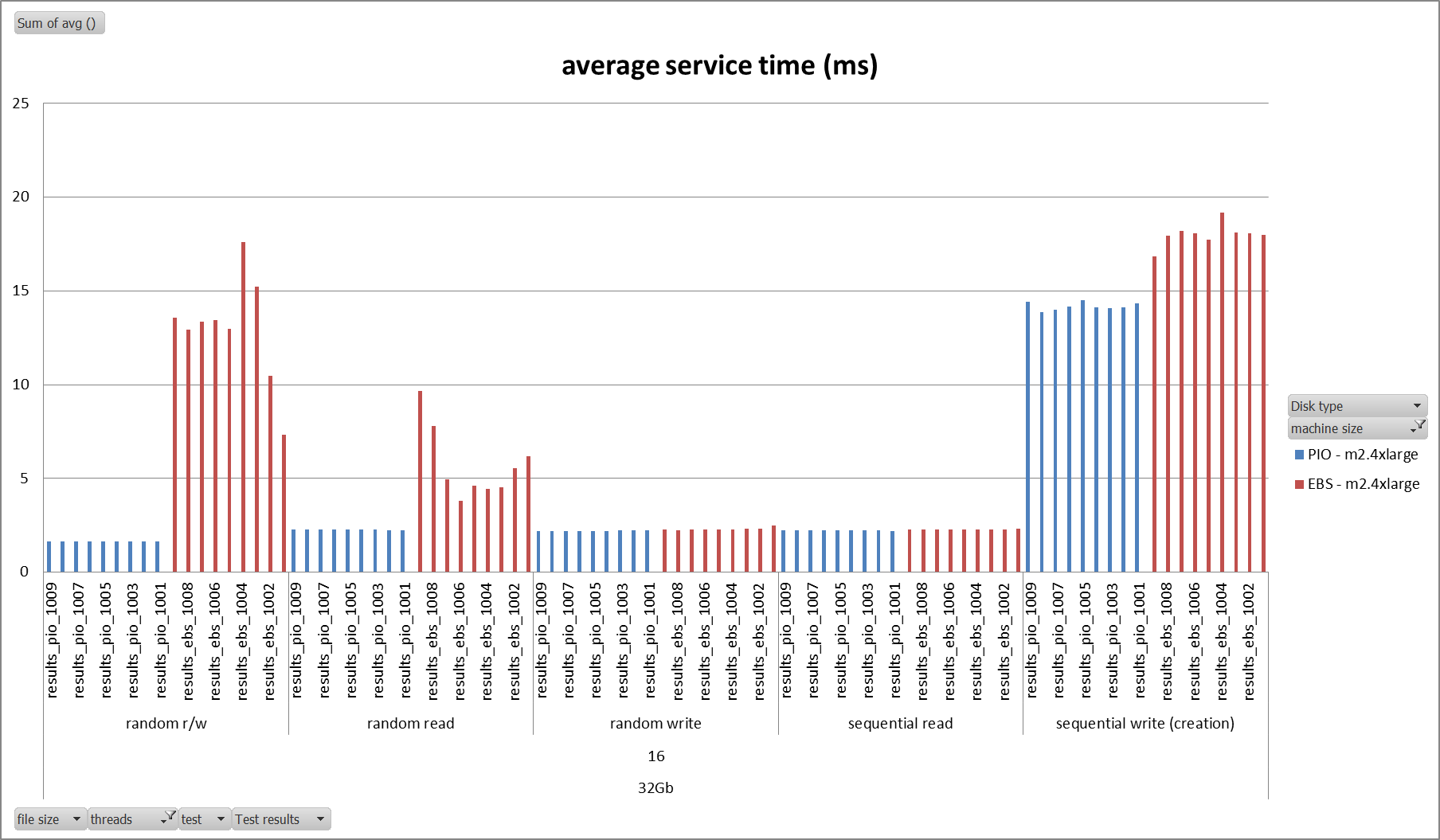

Service time (msec)

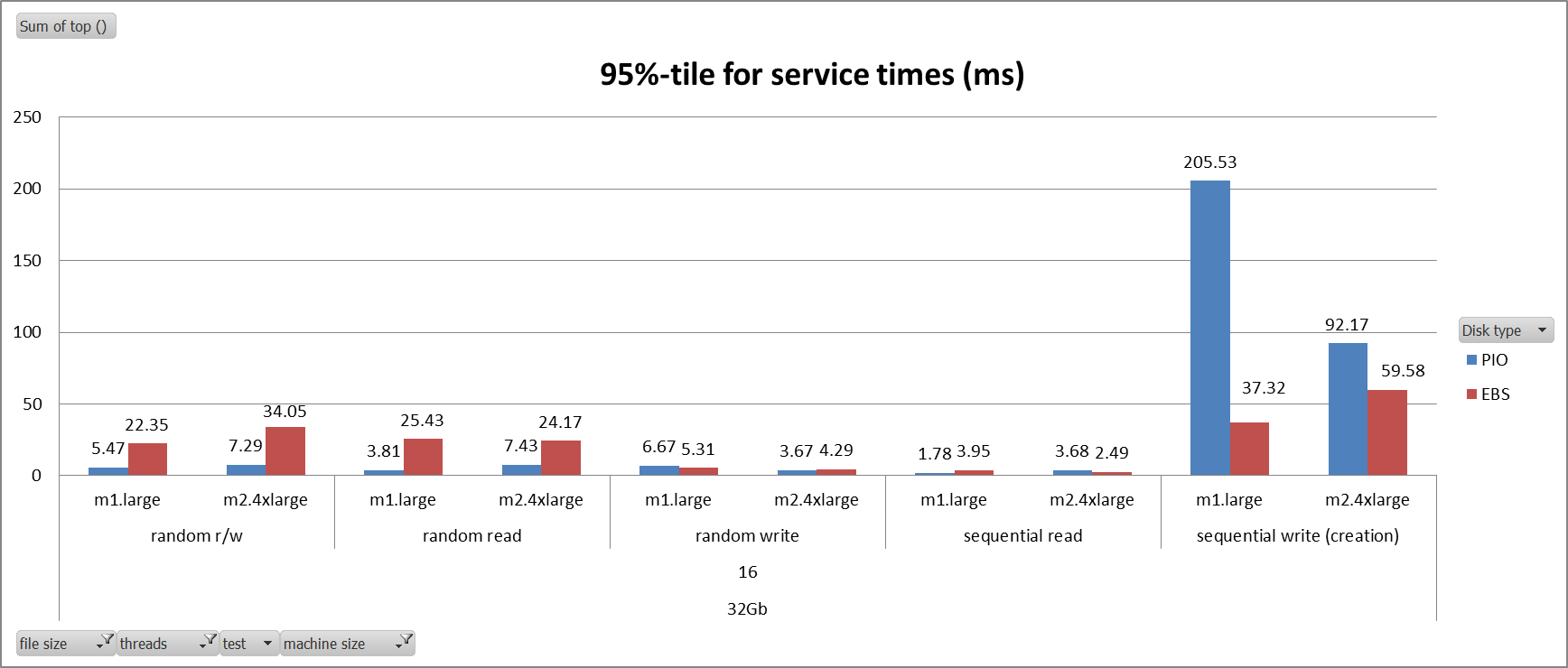

Looking at the service time averages of all the tests, we can see that PIOPS volumes performed on par with or better than EBS volumes across the board. One outlier is the m1.large sequential writes on PIOPS. Looking at the individual results (shown below), the service time was consistent across all the tests, so we are not sure whether this is a transient error or something else.

Brendan Gregg, in the comments below, asked for the 95th percentile of the results. They are in the chart below.

Performance consistency

One benefit of PIOPS is the consistency of its performance. In the chart below measuring IOPS, the performance of PIOPS was the same across each of the nine tests for all the different workloads, whereas with EBS the performance of random r/w and random read fluctuates wildly between tests. This is due to read performance being impacted by noisy neighbors in the multi-tenant environment. Amazon fixed this problem by limiting IOPS per volume and implementing dedicated bandwidth for EBS with their EBS-optimized instances. This graph illustrates this nicely.

The next chart shows how these features also improve service time consistency for PIOPS volumes. PIOPS’s service times are practically the same over each run, whereas the EBS volumes are all over the map for random r/w and random reads.
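Consistency across runs, and the 95th percentile requested in the comments, can both be put into numbers. The Python sketch below shows one possible way, using the coefficient of variation (standard deviation divided by mean) and a nearest-rank percentile. The function names are ours, and this is not necessarily how the charts in this post were produced. The sample values in the usage section are made up purely to exercise the functions; they are not measurements.

```python
import math
import statistics
from typing import Sequence


def coefficient_of_variation(samples: Sequence[float]) -> float:
    """Population standard deviation divided by the mean (0.0 = perfectly steady)."""
    mean = statistics.mean(samples)
    if mean == 0:
        raise ValueError("mean is zero; coefficient of variation is undefined")
    return statistics.pstdev(samples) / mean


def percentile_nearest_rank(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% at or below it."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


if __name__ == "__main__":
    # Made-up per-run values, NOT results from this post.
    steady_runs = [100, 101, 99, 100, 100, 102, 98, 100, 100]
    noisy_runs = [40, 160, 75, 120, 30, 140, 90, 60, 185]
    for name, runs in (("steady", steady_runs), ("noisy", noisy_runs)):
        print(name,
              "CV=%.3f" % coefficient_of_variation(runs),
              "p95=%s" % percentile_nearest_rank(runs, 95))
```

A lower coefficient of variation means the nine runs of a workload landed closer together, which is the property the charts are meant to show.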

Conclusion

Provisioned IOPS was designed to address the limitations of EBS, the largest of which was inconsistent performance due to multi-tenancy. Amazon has attempted to address those issues by implementing dedicated IOPS per volume and EBS-optimized instances. Based on our testing, these two features seem to have hit the mark in improving EBS performance, especially for database workloads.

Our tests show dramatic improvements on database workloads compared with standard EBS volumes. On average we saw a 2.6 times improvement in random R/W IOPS on m1.large instances and a respectable 7.5 times improvement on an m2.4xlarge instance (however, I admit the performance of the standard EBS volumes was pretty bad during these tests, so additional tests are warranted). Random reads on the PIOPS volumes improved by 1.6 times on m1.large and 2.3 times on m2.4xlarge. Service times for random read/writes on PIOPS volumes improved by a factor of 2.7 on m1.large and 7.9 on m2.4xlarge. For random reads, PIOPS was 1.7 and 2.5 times faster on m1.large and m2.4xlarge respectively.

Based upon our tests PIOPS definitely provides much needed and much sought after performance improvements over standard EBS volumes. I’m glad to see that Amazon has heeded the calls of its customers and developed a persistent storage solution optimized for database workloads.

We are in the process of preparing MySQL tests using sysbench’s oltp option. We will post those results soon.

Author Spotlight:

Jeremy Przygode
